AI Trends in Financial Services: 93% Use AI, But Trust Remains an Issue

Financial services firms have moved decisively from experimenting with AI to deploying it for durable advantage. What was once optional is now assumed, and the conversation has shifted from whether to use AI to how teams can use it to stay competitive.

Our survey of more than 500 finance professionals shows that AI adoption is already mainstream, with 93% reporting they use or evaluate AI in some capacity. Rather than viewing AI as a threat to their roles, finance leaders see it as an opportunity to redirect their attention toward higher-judgment tasks. To do so effectively, they're prioritizing trust, integration, and infrastructure as the real differentiators shaping the future of AI in financial services.

Key Takeaways

- AI adoption is already widespread, with 93% of surveyed finance professionals reporting using or evaluating AI in some capacity.

- Only 25% say AI is fully integrated across their team or firm, creating an opportunity for early movers to build a durable advantage with purpose-built platforms.

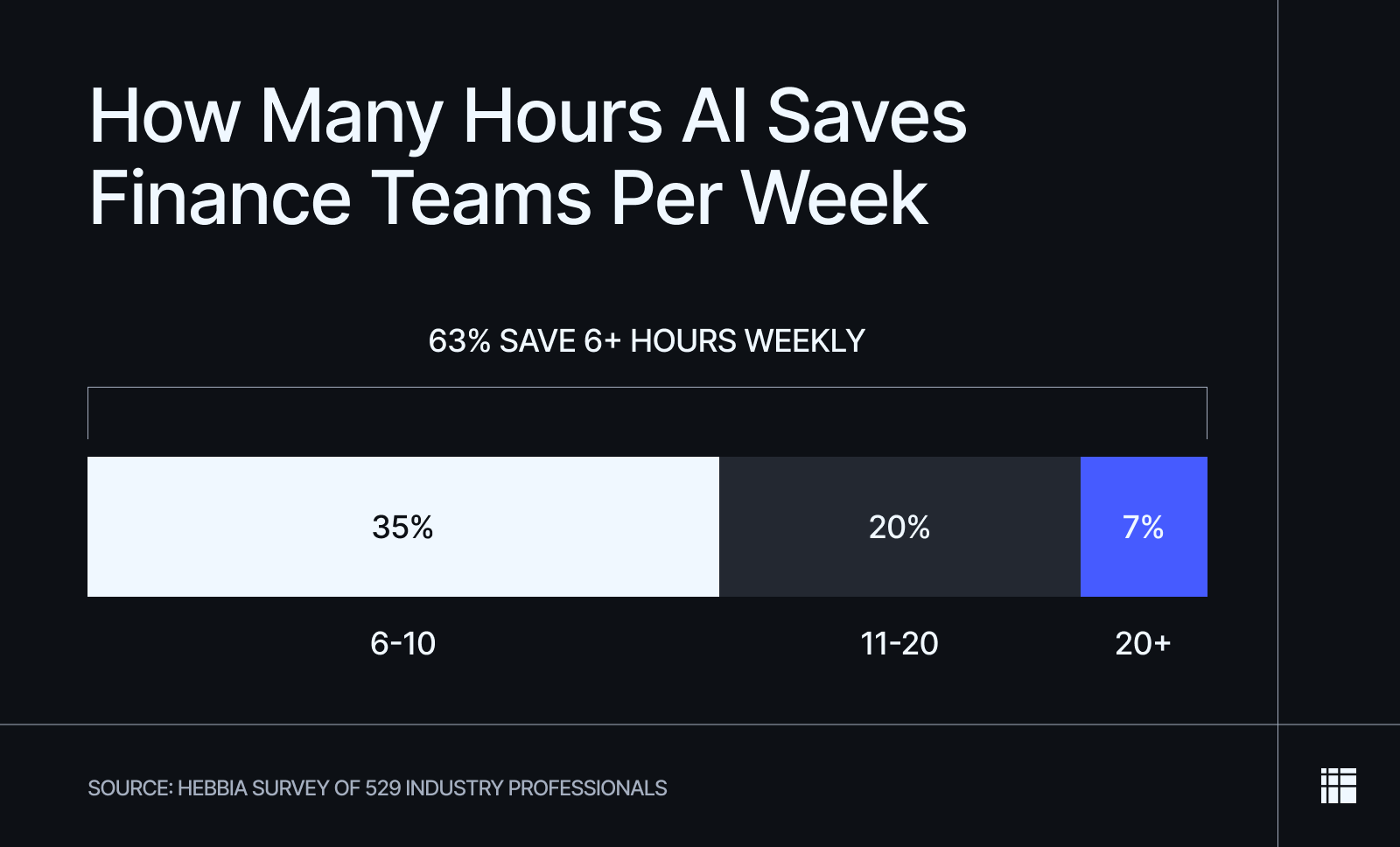

- AI is materially accelerating workflows: 63% of respondents report that AI saves them at least six hours per week, including 27% who save more than 10 hours weekly on research and analysis tasks.

- Only 8% of respondents expect AI to replace their role outright, while 26% say AI will take over routine workflows, shifting their role toward higher-level judgment.

- Data volume and fragmentation remain the biggest bottlenecks. Among respondents, 46% cite reading massive volumes of documents as a primary challenge, 46% struggle with extracting information from multiple sources, and 38% report difficulty searching across disconnected systems.

- Trust and infrastructure, not capability, limit the use of AI at scale. While 85% of respondents are at least somewhat confident in AI’s factual accuracy, survey participants pointed to security (29%) and system integration (21%) as the top barriers preventing firm-wide deployment.

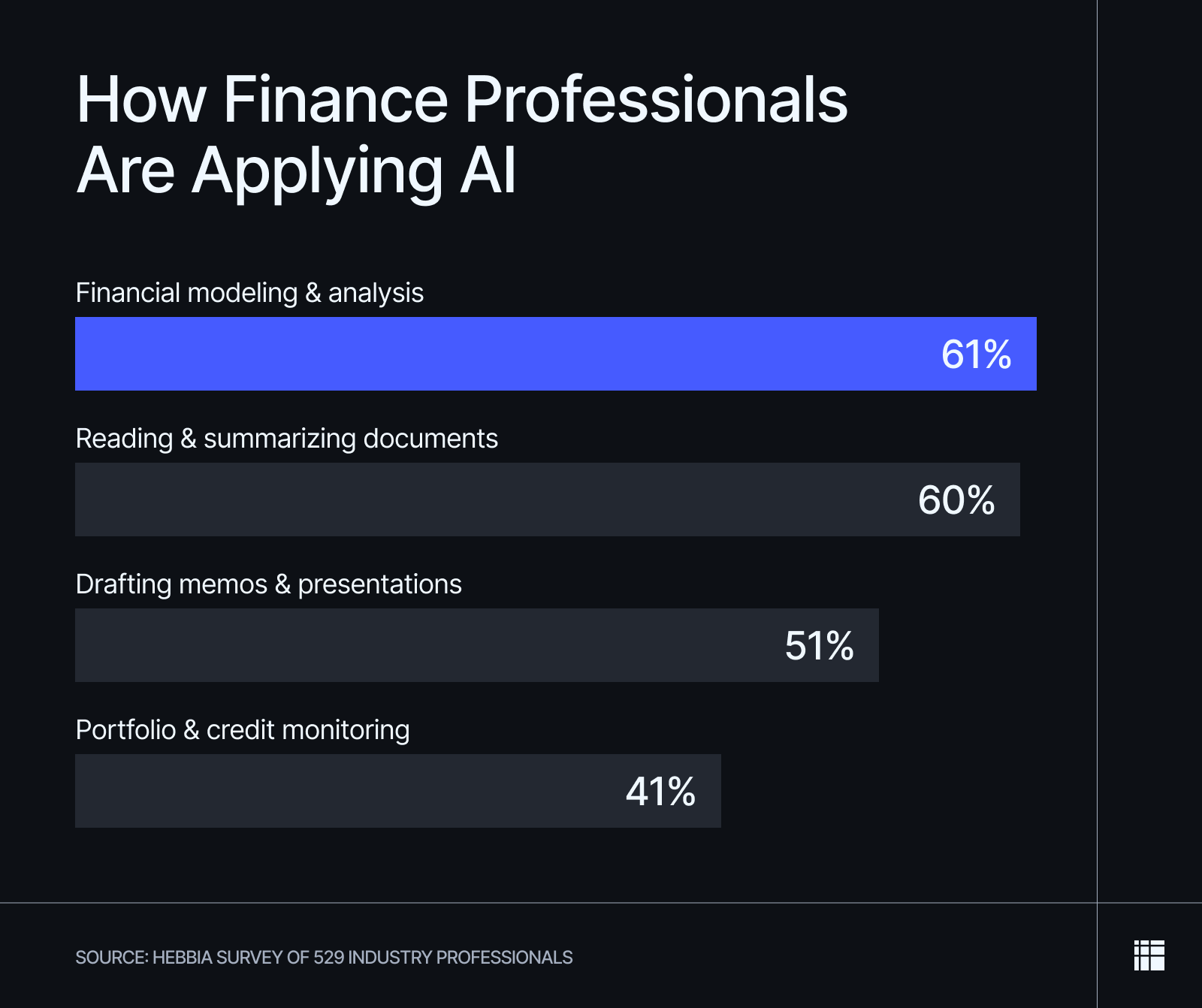

Financial Modeling and Document Analysis Are the Top AI Use Cases

Finance teams have moved past experimentation and are applying AI directly to core analytical workflows. The most common AI use cases today, led by financial modeling and document analysis, are central to institutional finance work.

This focus on core workflows helps explain why AI due diligence has become a priority for deal teams, and why many investment banks are embedding AI directly into deal execution. As AI moves deeper into research and execution, its value shifts from convenience to speed to signal, enabling teams to surface insights quickly and build conviction.

AI Is Already Saving Finance Teams 6+ Hours per Week

AI’s impact on research productivity is no longer theoretical. 63% of respondents report saving at least six hours per week using AI, and only a small minority report no meaningful time savings. These gains translate directly into faster screening, tighter diligence timelines, and earlier conviction in live opportunities. In competitive deal environments, those reclaimed hours compound quickly, increasing throughput without sacrificing rigor.

External benchmarks confirm this shift. Large investment banks report that internal AI systems complete research tasks in minutes that previously took days, while private-market teams use AI to compress diligence cycles significantly. For leveraged finance and credit teams, the upside increasingly depends on whether AI supports a reliable, repeatable path from diligence to deal.

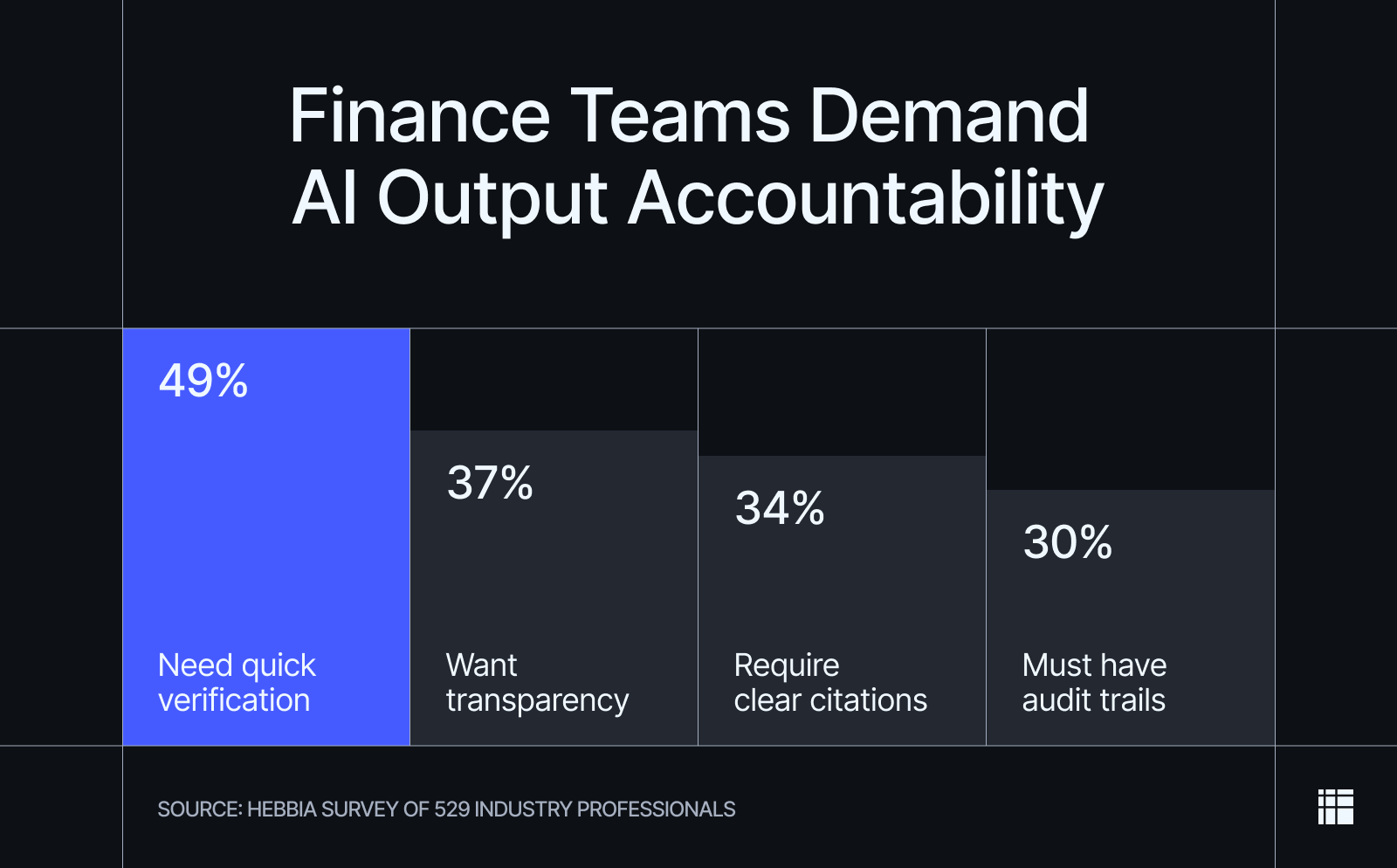

Professionals Demand Verifiable, Transparent AI-Assisted Research

Despite the widespread adoption of AI, research workflows remain constrained by unmet requirements for trust and transparency. While 85% of respondents report being at least somewhat confident in AI’s factual accuracy when its answers are grounded in source documents, that confidence drops sharply when users can’t easily verify its outputs.

When asked what they need in order to trust AI-generated research, respondents consistently pointed to the ability to verify for themselves where the answers come from.

A recent McKinsey analysis of generative AI in private markets found that reports produced with public LLMs were systematically more optimistic than research based on expert interviews, diverged on core metrics such as market size and growth, and missed many deal-critical details, including contract structures, unit economics, and regulatory hurdles.

The findings make clear that trust in AI-assisted research is conditional and operational. It depends just as much on citations and traceability as it does on how the system is designed and governed—especially in institutional-finance environments where decisions must withstand scrutiny.

Data Volume & Fragmentation Are the Biggest Bottlenecks

Data fragmentation sits at the center of these trust issues. Respondents consistently point to the following as the most significant workflow bottlenecks today—with or without AI:

- 46% are overwhelmed by the volume of documents and data they need to review.

- 46% struggle to extract information from multiple sources.

- 38% have difficulty searching across disconnected systems and sources.

- 34% face challenges analyzing and interpreting a mix of qualitative and quantitative data.

When research spans siloed systems, retrieval-only approaches begin to break down. Verifying AI-generated answers can take as long as finding the information in the first place, particularly when outputs cannot be traced cleanly back to source material or reconciled across formats. At this scale, accuracy alone is insufficient without context, lineage, and defensibility.

These constraints help explain why finance teams are reassessing financial research automation strategies and looking beyond traditional RAG (retrieval-augmented generation) systems. As data volume and complexity increase, teams are prioritizing platforms that can handle private data at an institutional scale without sacrificing verification, transparency, or auditability.

Security and Integration Are the Top Barriers to Scaling AI in Finance

There’s still a wide gap between individual and firm-wide deployment of AI. While finance professionals actively use AI in day-to-day work, respondents cite several organizational barriers that prevent AI from scaling across the entire company:

- 29% cite data security and confidentiality concerns.

- 21% point to integration challenges with existing systems and data sources.

- 16% cite hallucinations and accuracy risks.

- 11% point to a lack of internal training or expertise.

These barriers underscore that AI adoption is no longer limited by human interest or experimentation, but by corporate infrastructure, integration, and organizational readiness. Legacy systems and fragmented data environments often prevent AI from operating across the full set of documents, models, and workflows required at the enterprise level. As a result, AI remains confined to individual use cases rather than scaling into firm-wide research infrastructure.

These constraints indicate a clear market need. As firms look to move beyond experimentation and to deploy AI firm-wide, demand is increasingly focused on research infrastructure designed for secure, compliant operations at scale. That means platforms designed to support auditability, governance, and rigorous data controls.

Built for Institutional-Grade Security

Hebbia follows Zero Data Retention (ZDR), meaning customer data is never stored, reused, or used to train models. This security-first approach is designed to meet the strict confidentiality, governance, and compliance requirements of institutional finance teams.

Finance Jobs Are Being Redefined, Not Replaced

While concerns about AI-driven job displacement persist, the data points in a different direction. Only 8% of respondents expect AI to replace their role outright, while 26% say AI will take over routine workflows, shifting their role toward higher-level judgment and decision-making. Rather than eliminating roles, AI is reshaping how work gets done and moving professionals up the value chain.

That reframing is evident in how respondents describe AI’s role today. Nearly one-third (32%) view AI as more of a helper than a game-changer, reinforcing that human accountability remains central even as workflows accelerate. In practice, AI handles repetitive or time-intensive tasks, while humans remain responsible for interpreting results, assessing risk, and making final decisions.

Outside institutional finance, the same pattern holds. In wealth management, client trust remains with human advisors, but clients increasingly prefer advisors who actively use AI, especially younger investors. The data indicates that human judgment remains essential, but AI-augmented expertise is increasingly preferred. In this future, finance professionals are not replaced; they become faster, more strategic, and more accountable.

Hebbia Empowers Teams to Embrace the Future of Financial Research

The conclusion is clear: AI adoption among finance teams is already widespread, and productivity gains from it are real. Yet trust, integration, and infrastructure determine who captures a lasting edge from the technology. As AI investment across financial services continues to grow, differentiation will depend on whether teams adopt AI-native systems that can handle private data and institutional scrutiny.

Building on the success of early experiments, the industry is moving toward wider-scale adoption of AI. Hebbia is designed for this environment. By enabling deep reasoning across private documents, our platform delivers verifiable, source-linked outputs suitable for real decision-making at scale. It allows teams to use AI’s full potential with ease, speed, and confidence.

Methodology

This report is based on an online survey of 529 respondents, conducted via Centiment in December 2025. Respondents were deal- and investment-focused finance professionals working at investment banks, asset managers, and other advisors to the industry.

Participants represented a range of seniority levels and firm sizes, from small teams to global organizations. The survey covered AI usage in research and analysis workflows, time savings, confidence in AI outputs, and barriers to broader adoption. Percentages are calculated based on total respondents, and some questions allowed multiple selections. The data is unweighted, and the margin of error is approximately +/-3% for the overall sample with a 95% confidence level.
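For readers who want to see how a survey margin of error is typically derived, the sketch below applies the standard normal-approximation formula for a proportion, MOE = z · sqrt(p(1 − p) / n), with z = 1.96 for 95% confidence and n = 529. It is illustrative only and is not the survey vendor's stated method: it assumes simple random sampling, which an unweighted online panel does not strictly satisfy, and the helper name `margin_of_error` is hypothetical. It shows that the margin depends on the proportion being estimated: it is narrower for lopsided figures such as the 93% headline and widest for proportions near 50%.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion.

    Hypothetical helper. Assumes simple random sampling; z = 1.96 gives 95% confidence.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a proportion between 0 and 1")
    if n <= 0:
        raise ValueError("n must be a positive sample size")
    return z * math.sqrt(p * (1.0 - p) / n)


N = 529  # total respondents, per the methodology above

# Compare the worst case (p = 0.5) with two proportions reported in this survey.
for label, p in [("worst case (p = 0.50)", 0.50),
                 ("use or evaluate AI (93%)", 0.93),
                 ("fully integrated (25%)", 0.25)]:
    print(f"{label}: +/-{margin_of_error(p, N) * 100:.1f} points")
```

Because p(1 − p) peaks at p = 0.5, that row gives the widest interval for this sample size; figures far from 50% carry a tighter margin.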